AUV Case Study: Marine Roboticists are Turning AUV Sight into Perception

Scientists Arjuna Balasuriya (bottom right) and Camille Monnier (top right), along with staff from Teledyne Marine, wait after deploying AutoTRap Onboard™ for an ANTX mine-hunting test. Source: Teledyne

In 2018, marine robotics scientist Arjuna Balasuriya was at the Advanced Naval Technology Exercise (ANTX) in Newport, Rhode Island, testing new software for an autonomous underwater vehicle (AUV). In the past, his AUV research had meant long ocean cruises in rough, choppy waters, but this time he was analyzing his algorithms from a comfortable, dry seat on land.

Balasuriya and his team from Charles River Analytics were developing an app for Teledyne Gavia AUVs, which can navigate the ocean on their own to take sonar surveys of the seafloor. But to be truly autonomous, the vehicles needed to automatically detect and recognize objects of interest (anything from mines to airplane black boxes to pipelines) so they could make decisions such as rerouting for a closer inspection.

Balasuriya recognized this as a sub-problem of the classic machine learning and computer vision domain, where Charles River Analytics had experience from numerous U.S. military and government contracts. When Teledyne expressed interest in solving this problem in 2015, Balasuriya thought to himself: why not us?

Now, Charles River is releasing a commercial software product, AutoTRap Onboard, that integrates into Teledyne’s Gavia line of AUVs to enable real-time detection and recognition of objects of interest to marine engineers and scientists. The Charles River team first tested and qualified the AutoTRap Onboard system on a Teledyne Gavia in the North Sea off Belgium in 2018.

“The sea state can be really choppy,” Balasuriya said, recalling his cruises on the North Sea. “You have to tie your laptop down onto a pole or some structure, and you have to tie yourself down or you will be rolled and thrown out.”

Gavia AUVs look like yellow submarines, a few meters long and weighing 50 to 130 kilograms. They can perform ocean surveys with advanced equipment such as long-range side-scan sonar, which is highly useful for commercial, defense, and scientific purposes.

A Teledyne Gavia prepared for testing with AutoTRap Onboard™ at Ashumet Pond in North Falmouth, Massachusetts. Source: Teledyne Marine.

When mounted to the belly of a Gavia vehicle, the sonar creates an image of a strip of seafloor roughly 100 meters wide. Images from different passes can be stitched together to provide a comprehensive picture, or survey, of the seafloor.
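Stitching passes into one survey means planning parallel tracks whose roughly 100-meter-wide images overlap. The arithmetic can be sketched as follows, assuming a simple "lawnmower" pattern of straight, parallel lanes over a rectangular area and a hypothetical 20% overlap between neighboring strips; neither the pattern nor the overlap figure comes from Teledyne or Charles River.

```python
import math


def lawnmower_lanes(area_width_m: float, swath_m: float = 100.0, overlap: float = 0.2) -> int:
    """Number of parallel survey lanes needed to cover a rectangular area.

    Assumption: each pass images a strip `swath_m` wide, and adjacent strips
    overlap by the fraction `overlap` so their images can be stitched together.
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    if area_width_m <= swath_m:
        return 1  # a single pass already covers the whole width
    # Lateral distance between neighboring lane centerlines.
    spacing = swath_m * (1.0 - overlap)
    # The first lane covers one full swath; each extra lane adds `spacing` of new ground.
    return 1 + math.ceil((area_width_m - swath_m) / spacing)
```

For example, `lawnmower_lanes(1000)` returns 13: with 80 m between lanes, 12 lanes would cover only 980 m of a 1 km wide area. More overlap makes stitching easier but costs extra passes.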

Surveys are performed for all sorts of reasons, such as sweeping a port or contested ocean territory for hidden mines. Companies that insure shipping containers can run surveys to find lost containers and verify container-loss claims. Pipelines to offshore oil wells, which can run for hundreds of kilometers, can be located by surveys and then inspected with more detailed imaging sensors, such as high-frequency sonar or electro-optical sensors, which can also be installed on AUVs.

Before the advent of software like AutoTRap Onboard, human analysts had to painstakingly inspect survey data to find objects of interest. Now, object detection and recognition can be done automatically.

However, because of computational demands, many object detection solutions are designed to run only after an AUV has returned to the surface and handed its data back to its operators. The AUV can effectively only play fetch. For an AUV to act on its survey data, such as by returning to an object of interest for closer inspection, its operators must perform costly, physically demanding redeployments.

“Launching and recovering an AUV is not a fun thing to do,” Balasuriya said. “It’s rocking, and you’re trying to get this huge thing into the boat. It’s risky.”

To address this problem, AutoTRap runs in real time on the Gavia's embedded hardware. As soon as it finds an object, AutoTRap sends a message to other onboard AUV software so that the vehicle can, for example, reroute for a closer pass. On an AUV equipped with an acoustic broadband transmitter, AutoTRap can also relay object detections in real time to a mission planner on the surface.
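The article does not describe AutoTRap's actual interfaces, so the following is only a hypothetical sketch of the detect-then-reroute pattern: a recognition module publishes a detection message, and a toy mission planner responds by queueing a re-inspection waypoint when the detector's confidence clears a threshold. The `Detection` and `MissionPlanner` types, the 0.7 threshold, and the local east/north coordinate frame are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Detection:
    """Hypothetical message an onboard recognition module might publish."""
    label: str                      # e.g. "mine-like", "container", "pipeline"
    confidence: float               # detector score in [0, 1]
    position: Tuple[float, float]   # estimated (east, north) in meters, local frame


@dataclass
class MissionPlanner:
    """Toy planner that reacts to detections by scheduling a closer pass."""
    waypoints: List[Tuple[float, float]]
    min_confidence: float = 0.7     # assumed threshold, not a published value
    reinspected: List[Detection] = field(default_factory=list)

    def on_detection(self, det: Detection) -> bool:
        """Put a re-inspection waypoint at the front of the route.

        Returns True if the vehicle was rerouted, False if it keeps surveying.
        """
        if det.confidence < self.min_confidence:
            return False  # too uncertain to justify a detour
        self.waypoints.insert(0, det.position)
        self.reinspected.append(det)
        return True
```

Usage: `MissionPlanner(waypoints=[(0.0, 500.0)]).on_detection(Detection("mine-like", 0.92, (35.0, 210.0)))` moves the vehicle's next waypoint to the detection. The threshold keeps low-confidence returns from interrupting the survey. A fielded system would also need to de-duplicate repeated sightings of the same object.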

Because of the wide range of objects of interest (mines, pipelines, shipping containers), Balasuriya and his team needed AutoTRap's object recognition capability to be versatile. To meet this need, they designed an object detection model that builds on recent advances in deep learning for object detection and representation. Charles River Analytics (CRA) engineers can custom-train this model to recognize an individual customer's targets of interest. Camille Monnier is the computer vision specialist who leads development of AutoTRap's sonar data processing model.

“Sonar images are interesting and very noisy, very annoying to deal with,” he said. “If you’re not familiar with them, they have some interesting aspects which make them very challenging to train a classifier/detector on.”

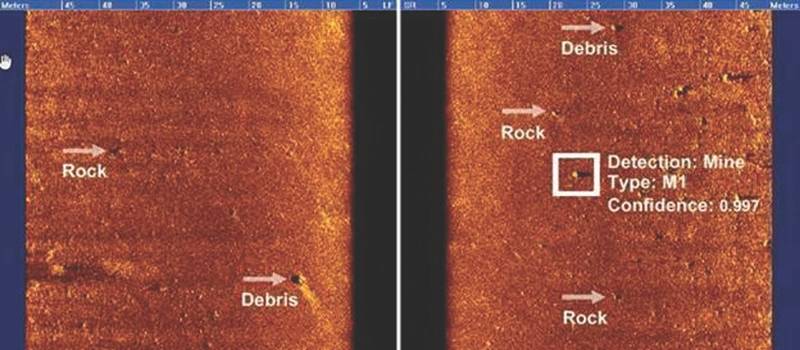

Sonar data reveals the difficulty of performing object detection: the seafloor exhibits an abundance of naturally occurring features that look similar to man-made objects. Source: Teledyne Marine & Charles River Analytics

Part of the difficulty of performing object detection on sonar images is the limited amount of data available to train a deep learning solution. Well-known deep learning algorithms, such as the YOLO convolutional neural network, require training on enormous amounts of target object data (such as images of cats or human faces) to recognize those objects accurately. Optical cat images for network training are abundant; acoustic sonar images of target objects are not.

With its unique object detection algorithms, known as automatic target recognition (ATR) algorithms in the mine-hunting community, AutoTRap Onboard has demonstrated high detection rates and low false positive rates on test targets in the North Sea and in other marine environments, such as the North Atlantic off Iceland. There, it identified truncated conical objects on a rocky volcanic seafloor at depths of 10 to 30 meters with a 90% probability of detection.
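A figure like "90% probability" becomes clearer once the underlying metrics are spelled out. ATR performance is commonly summarized by the probability of detection (the fraction of real targets the detector finds) together with a false-alarm rate; normalizing false alarms by the area surveyed is one common convention and is assumed here. The counts in the example are hypothetical and are not the Iceland or North Sea trial results.

```python
from typing import Tuple


def detection_metrics(true_pos: int, false_neg: int, false_pos: int,
                      area_km2: float) -> Tuple[float, float]:
    """Probability of detection and false-alarm rate for an ATR evaluation.

    pd  = TP / (TP + FN)   fraction of ground-truth targets that were detected
    far = FP / area        false alarms per square kilometer surveyed
    """
    if true_pos + false_neg == 0:
        raise ValueError("need at least one ground-truth target")
    if area_km2 <= 0:
        raise ValueError("surveyed area must be positive")
    pd = true_pos / (true_pos + false_neg)
    far = false_pos / area_km2
    return pd, far
```

With hypothetical counts of 18 targets found out of 20 placed, and 3 false alarms over 1.5 km², `detection_metrics(18, 2, 3, 1.5)` gives a probability of detection of 0.9 and 2 false alarms per km². Reporting both numbers matters, because a detector can trivially raise its detection rate by flagging every rock on a volcanic seafloor.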

February 2026
